YouTube Data Analysis Project

Introduction

While generative AI advancements by recent LLM models from companies like OpenAI and Meta showcase remarkable technological progress, it’s crucial to underscore the need for a balanced approach that merges technical proficiency with domain-specific insights. This project embodies this principle by attentively managing the quota limitations of the YouTube API and selecting and optimizing suitable combinations of machine learning and deep learning models for the Stacking Ensemble Classifier. Moreover, by leveraging interactive visualizations with Plotly and dashboards in PowerBI and Qlik Sense, the project enables us to derive meaningful insights into video content dynamics, which are practically applicable to improving video performance and audience engagement. Insights from the 2023 Kaggle AI Report and its best notebooks for best practices in ensemble modeling were instrumental in shaping the approach for this project. The link to the repository of codes and dashboards of this project is in this link in my GitHub. However, here we will delve into the problem and also show how I have tried to develop the project with different tools to make it a real-world solution.

Table of Contents

- Introduction

- Research Problem

- Data Collection

- Engagement Concept and Features

- Exploratory Data Analysis (EDA)

- Feature Selection

- Sentiment & Subjectivity Analysis

- Denoising Auto-Encoder (DAE)

- Stacking Ensemble Structure

- PowerBI and Qlik Sense Dashboards

- Results of Ensemble Method

- References and Further Reading

Research Problem

Imagine you’re a broadcaster or an MOOC developer looking to understand how “Educational” video streamers perform. You might want to analyze audience engagement across various topics, such as DIY projects versus Social Science discussions, and understand how video duration impacts viewer interaction. YouTube’s Analytics dashboard is robust but limited in scope, especially when it comes to analyzing competitors’ data. This project uses the Google YouTube Data API v3 to extract valuable insights, focusing on engagement rates, video duration, upload frequency, and audience sentiment.

Data Collection

Google YouTube Data API v3: To collect the necessary data, I utilized the Google YouTube Data API v3. This API allows access to comprehensive metadata for both videos and channels. However, using this API comes with several challenges:

- Quota Limits: The API has strict quota limits, restricting the amount of data that can be fetched within a given period.

- Data Accessibility: Some features and data points are not accessible due to API restrictions.

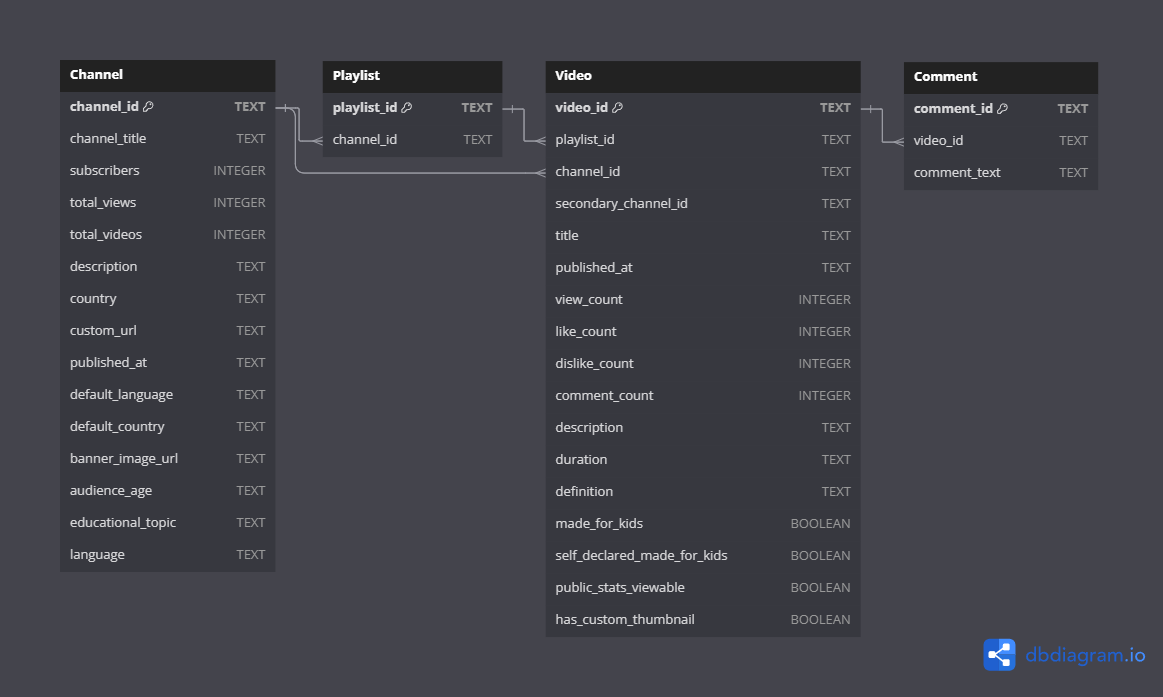

Error Handling and Quality Data Storage: Given the quota limits and potential errors when accessing the API, robust error handling was implemented to ensure data quality and integrity. Data was stored in an SQLite database for efficient access and management. Here’s an overview of the error handling and data storage strategy:

- Error Handling: Implemented retries and logging for API requests to handle temporary failures.

- Data Validation: Ensured that fetched data met quality standards before being stored.

- SQLite Database: Used SQLite for storing channel and video metadata, providing a lightweight yet powerful database solution.

Despite these measures, the limited quota posed a significant challenge. To mitigate this, I have reached out to the Google Research team for a researcher authentication, which, if granted, would allow for higher quota limits and better access to data.

Fig. 1: ERD of the YouTube database

Engagement Concept and Features

Engagement is a crucial metric for evaluating video content on YouTube, reflecting user interactions like views, likes, comments, and shares. High engagement indicates content that resonates well with the audience.

Engagement Formula:

\[ \text{Engagement Rate} = \frac{\text{Total Engagements (Likes + Comments + Shares)}}{\text{Total Views}} \times 100 \]

Features Collected for the Project:

- Channel Metadata:

- ID, Title, Subscribers, Total Views, Total Videos, Description, Country, URL, Published Date, Language, Country, Banner Image URL, Audience Age, Educational Topic

- Playlist Metadata:

- Playlist ID, Channel ID

- Video Metadata:

- ID, Title, Published Date, Channel ID, Playlist ID, View Count, Like Count, Dislike Count, Comment Count, Description, Duration, Definition, Made for Kids, Public Stats Viewable, Custom Thumbnail

- Comment Metadata:

- Comment ID, Video ID, Comment Text

- Sentiment and Subjectivity Analysis:

- Sentiment and Subjectivity of Video Title

- Sentiment and Subjectivity of Video Description

Exploratory Data Analysis (EDA)

Before interpreting the EDA results, it’s important to note that EDA is an observational study, showing associations that may involve lurking and confounding variables requiring further examination. The strategies suggested are based on a selective analysis of 95 channels within a subscriber range of 2 to 16 million.

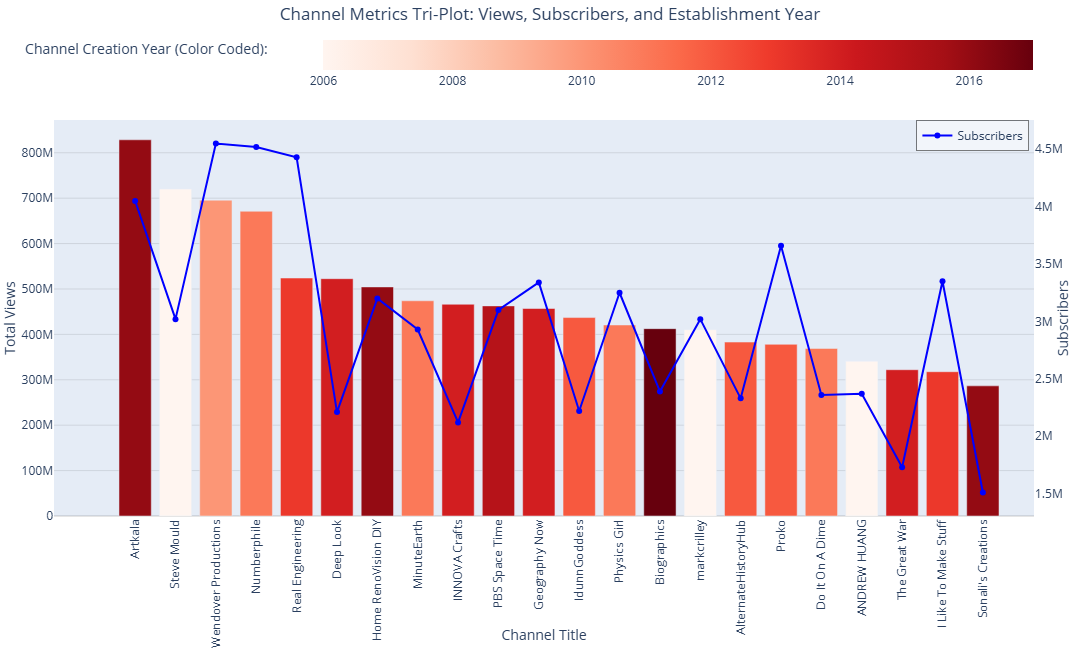

In this analysis, we explored YouTube channels focused on educational topics within a limited subscriber range to provide a fair comparison for channels at similar growth stages. Channels are ordered by view count to highlight trends more effectively.

Insights:

- Subscribers Distribution: Channels have subscribers ranging from 1.51 million to 4.55 million.

- Total Views Distribution: Views range from approximately 286 million to 829 million. The average total views are around 473 million, with a high standard deviation of 142 million, indicating diverse engagement levels.

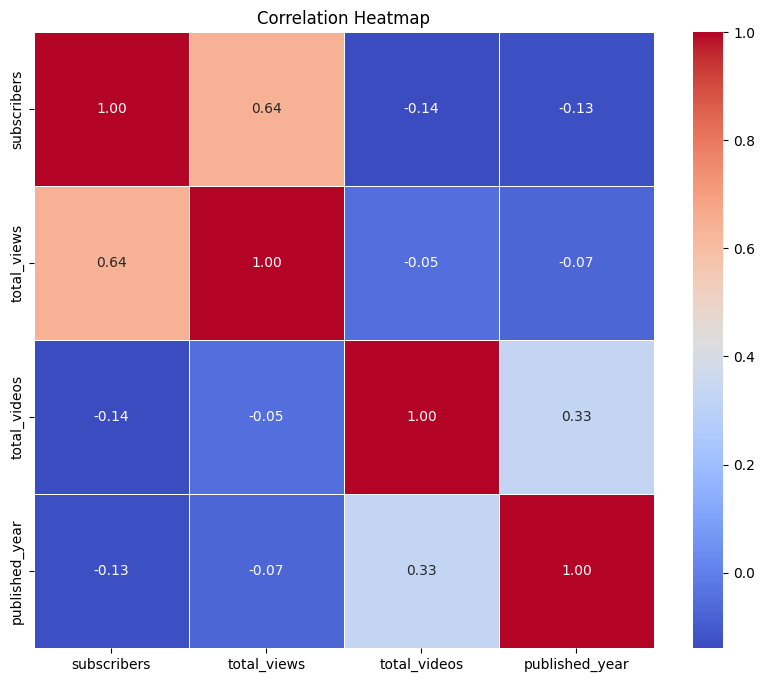

- Correlation Analysis: A moderate positive correlation ( 0.64) between subscribers and total views suggests that more subscribers generally lead to more views. The low correlation between total views and total videos (-0.05) shows that having more videos does not necessarily result in higher views.

- Publication Years: Most channels were created between 2010 and 2014, showing similar growth levels. Notable exceptions include newer channels achieving significant engagement.

Fig. 2: Channels metrics plot with total views, total subscribers, and creation year

Fig. 3: Correlation heatmap of the main factors of the channels

Notable Channels:

- Artkala: Leading in total views (828 million) and substantial subscribers (4.05 million), showcasing the appeal of DIY content despite being relatively new (2016).

- Biographics: The newest channel (2017) with 413 million views and 2.39 million subscribers, highlighting an effective content strategy in social sciences.

Conclusion:

Channels with higher subscribers generally have higher total views, but more videos do not necessarily correlate with higher view counts. Channels established earlier, such as those from 2006, show enduring popularity. Additionally, DIY channels in the top quarter for view count and subscribers demonstrate rapid growth, indicating the potential of DIY content. The correlation analysis suggests that focusing on quality and engagement of content is more effective than merely increasing the number of videos.

Feature Selection: Why Embedded Methods Are Suitable

Embedded methods integrate feature selection directly into the model training process. This approach is particularly advantageous for small datasets with a high feature-to-row ratio because:

- Efficiency: Embedded methods are generally more computationally efficient compared to wrapper methods, which involve training multiple models. This is crucial for small datasets where computational resources are limited.

- Performance: Embedded methods strike a balance between computational cost and model performance. They are designed to select features that contribute the most to the model’s predictive power.

- Overfitting: Embedded methods typically include regularization techniques that help reduce the risk of overfitting, which is a significant concern for small datasets like this project data.

Regularization Models like Ridge, Lasso, and Elastic Net can be used as embedded methods for feature selection. These models introduce a penalty term in the objective function during training to control the complexity of the model and encourage sparsity in the coefficient estimates. The resulting coefficients can then be used to assess the importance of features and perform feature selection.

Sentiment & Subjectivity Analysis with Relevance to Video Engagement

**Sentiment & Subjectivity Analysis:** Sentiment analysis is the process of determining the emotional tone behind a series of words, used to gain an understanding of the attitudes, opinions, and emotions expressed within an online mention. Subjectivity analysis, on the other hand, determines whether a piece of text is subjective (opinion-based) or objective (fact-based).

**Relevance to Video Engagement:** In the context of video engagement analysis, sentiment and subjectivity analysis can provide insights into how viewers feel about the content they are watching. For instance:

- Positive Sentiments: Videos with titles and descriptions that have a positive sentiment tend to attract more viewers and receive higher engagement rates (likes, shares, comments). Positive emotions can encourage viewers to engage more with the content.

- Negative Sentiments: Conversely, videos with negative sentiments might lead to lower engagement or provoke discussions and comments, depending on the nature of the content.

- Subjectivity: Subjective content, which expresses personal opinions or emotions, can create a more engaging experience by resonating with viewers on a personal level. Objective content, presenting factual information, might appeal to viewers seeking knowledge or information.

By analyzing the sentiment and subjectivity of video titles, descriptions, and in next phase even comments using TextBlob library, there are potentials to enhance viewer engagement. For instance, content creators might adjust the tone of their titles and descriptions regarding in which educational topic they are working to evoke a desired emotional response or foster a sense of community and discussion among viewers.

Denoising Auto-Encoder (DAE) for Representation Learning in Tabular Data

Auto-encoders have a long history and diverse applications, particularly for high-dimensional data such as images. These neural networks are designed to learn a compressed representation (encoding) of input data, typically for the purposes of dimensionality reduction or feature extraction. By reconstructing input data from its compressed form, auto-encoders can effectively identify and learn significant patterns in the data.

A denoising auto-encoder (DAE) is a specialized type of auto-encoder trained to remove noise from input data. The fundamental idea is to corrupt the input data with noise and train the auto-encoder to reconstruct the original, uncorrupted data. This approach helps the model learn more robust features and representations that are less sensitive to noise and variations in the data.

Fig. 4: Denoising Autoencoder with swap noise structure

While DAEs are widely used for image data, recent best practices have demonstrated their effectiveness for tabular data with high feature-to-row ratios, such as in this project. DAEs are particularly useful for feature extraction and dimensionality reduction in small datasets, helping capture important patterns and relationships within the data. In the case of unstructured data like images, text, or speech, there are numerous established tools to introduce noise. However, it is more challenging to add noise to structured tabular data because each variable may have a different range and distribution. For example, introducing Gaussian Noise to each variable requires determining an appropriate variance for each, which can be complex. Moreover, adding decimal noise to integer-only variables, such as counts, can distort the data.

Tailoring DAE for Tabular Data with Swap Noise and GaussRank Normalization:

During recent best practices, using swap noise and GaussRank Normalization for DAEs has significantly improved their performance for tabular data. These techniques help enhance data augmentation and normalization, making DAEs powerful tools for representation learning in tabular datasets. The combination of these techniques helps overcome the challenges associated with training neural networks on small datasets, improving model robustness and generalization.

Key Techniques:

1. Swap Noise:

- Swap involves randomly swapping feature values within the same class to create synthetic data. This method increases data variability and helps prevent overfitting, especially in small datasets where additional training samples can significantly enhance model performance.

- This type of noise substitutes existing values within the dataset, preventing the generation of out-of-place values. It is straightforward to use since it doesn’t require setting hyperparameters like mean or variance, and it is versatile across different variable distributions.

import numpy as np

def swap_noise(df, swap_prob=0.1):

df_copy = df.copy()

for col in df.columns:

mask = np.random.rand(len(df)) < swap_prob

df_copy.loc[mask, col] = np.random.permutation(df[col].values[mask])

return df_copy

X_augmented = swap_noise(pd.DataFrame(X_normalized, columns=X.columns)).values

Technical Insights:

Swap noise leverages the concept of data augmentation to artificially increase the size of the training dataset, which is crucial for improving model performance and generalization. By maintaining the class distribution and statistical properties of the original data, swap noise ensures that the synthetic samples are realistic and representative of the actual data distribution.

2. GaussRank Normalization:

Concept of GaussRank Normalization: GaussRank Normalization transforms features to follow a Gaussian distribution by ranking the data and applying the inverse Gaussian cumulative distribution function. This technique stabilizes the variance of features and improves the performance of algorithms that assume normally distributed data, such as neural networks.

In the context of DAEs, GaussRank Normalization helps neural networks learn more efficiently by normalizing ordinal variables into a Gaussian distribution, smoothing the optimization plane and improving training efficiency.

GaussRank Procedure:

- Compute ranks for each value in a given column using argsort.

- Normalize the ranks to range from -1 to 1.

- Apply the inverse error function (erfinv) to transform the normalized ranks

There is no comment. be first one!